I originally wrote this op-ed last winter for A Global Village, a student-run journal on international affairs at Imperial College aimed at a non-specialist audience. The full issue can be found here. Obviously, the article is now a bit dated, and some figures are missing from this version (see the original, nicely formatted PDF).

Ranging from Iran's nuclear facilities to thousands of American diplomatic cables, recent high-profile breaches of IT systems have highlighted the growing importance of cyber-security in this Information Age. Cyber-crime crosses national boundaries, and the difficulty is compounded by the anonymity of attackers and their disproportionate potential for damage. While a notable problem in its own right, cyber-crime also presages the inevitable conflicts that will arise as the Internet brings varying cultural norms into close contact.

The advent of the Information Age has enabled unprecedented connectivity, not only between individuals around the globe but also across organizational scales. Large governments and corporations may quickly – and cheaply – reach and be reached directly by almost anyone with Internet access, and common software platforms used throughout the world greatly facilitate the transmission of information even to non-networked systems. Such access, while beneficial to all, comes at a potential price.

Under Attack

Perhaps the most widely reviled incarnation of cyber-crime is malware. It is a rare computer user who hasn't at some point dealt with viruses, worms, Trojan horses, spyware, or other programs of their ilk (see table). These generally unwanted inhabitants of our IT systems can be personally devastating, as anyone who has lost data to particularly virulent malware can testify, yet they are almost always undirected: they cause damage, steal information, and take over computers wherever improperly secured systems can be found.

Malware was originally the province of hobbyists and academics, but in the last decade organized cyber-crime has driven much of its development. The appearance of Stuxnet in mid-2010, however, suggests the growing involvement of national governments as both instigators and targets of cyber-attacks. A very sophisticated computer worm, almost certainly requiring the efforts of multiple skilled programmers, Stuxnet appears to have targeted centrifuges in Iran's nuclear facilities, thereby delaying the country's uranium enrichment program. Given the lack of any obvious commercial motive and the significant investment required to create Stuxnet, many believe it to be the work of a Western power, possibly the United States or Israel, though no direct evidence has surfaced either way.

More directed attacks against particular IT systems are most commonly seen against large, juicy targets such as corporate servers and government databases. These range from the technologically simple Distributed Denial of Service (DDoS) attack, which involves nothing more than overwhelming a server with junk traffic, slowing or even completely blocking legitimate traffic, to carefully crafted 'hacking' of data servers to steal information. Successful attacks can expose sensitive personal information, such as credit card numbers, of thousands of people, or cripple Internet services; as we come to rely more and more on the Internet for everything from communication to payment, the potential for damage only grows.
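To make "overwhelming a server with junk traffic" concrete, here is a minimal toy model in Python. It assumes, as a simplification not drawn from the article, that a server can answer a fixed number of requests per second and, once saturated, cannot tell junk from legitimate requests, so each request has the same chance of being served. The function name `legitimate_served_fraction` and all figures are hypothetical.

```python
def legitimate_served_fraction(capacity: float, legit_rate: float, junk_rate: float) -> float:
    """Fraction of legitimate requests a saturated server still answers.

    Toy model (an assumption, not a description of any real server): the server
    handles at most `capacity` requests per second and, once overloaded, cannot
    distinguish junk from legitimate requests, so it serves each request with
    equal probability.
    """
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    total = legit_rate + junk_rate
    if total <= capacity:
        return 1.0  # not saturated: every request is served
    return capacity / total  # each request's chance of being served


# A server sized for 1,000 requests/s easily handles 500 legitimate requests/s...
print(legitimate_served_fraction(1000, 500, 0))       # 1.0
# ...drops half of them once junk pushes the total load to 2,000 requests/s...
print(legitimate_served_fraction(1000, 500, 1500))    # 0.5
# ...and a flood of 99,500 junk requests/s leaves legitimate users ~1% service.
print(legitimate_served_fraction(1000, 500, 99_500))  # 0.01
```

The attacker never needs to break into anything; sheer volume is enough, which is why pooling many machines into a botnet is so effective.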

Very recently, 'hacktivists' have made highly publicized DDoS attempts against companies perceived to have crossed Wikileaks, such as PayPal, Visa, and Mastercard. Not long after, the self-styled group 'Gnosis' stole over a million user emails and encrypted passwords from Gawker Media, which runs a number of fairly popular websites. National governments are not immune either, as demonstrated by the defacement of Georgian government websites during the 2008 South Ossetia war.

Conversely, Google made a splash in early 2010 when it announced a pullout from operations in mainland China following the hacking of Gmail accounts belonging to Chinese human rights activists. Google, the American government, and the Western press generally directed suspicion at the Chinese government, yet no concrete link was ever established. Leaked American diplomatic cables suggest that these incidents were part of a more extensive campaign of cyber-attacks traced to hackers located in China and using Chinese-language keyboards.

However damaging the immediate consequences of adversaries gaining control of critical infrastructure and data systems may be, the problem is compounded by the ease with which information can be duplicated and disseminated on the Internet, and by the resulting irreversibility of the damage. There has been much in the news lately about Wikileaks' dissemination of confidential American diplomatic cables, almost certainly leaked by someone authorized to access SIPRNet, a 'secure' classified network used by the US Department of Defense and Department of State. Whatever the justification for Washington's response, and despite its best efforts, all of the leaked information is still online and will likely remain so.

Insecure by Design

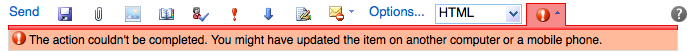

Why does the IT landscape prove so resistant to being secured? One reason is that many of the network protocols and paradigms used in today's Internet date back to a bygone era in which it was reasonable to assume that no one was acting maliciously or deceptively. For instance, SMTP, the protocol by which nearly all email is sent, allows the sender to arbitrarily specify the 'from' address; similar types of spoofing are possible with other protocols, hiding the actual originator of a transmission. There are of course many proposed technical solutions to these sorts of issues, but the need to retain backwards compatibility, coupled with the uneven adoption of new protocols, has limited the success of such mechanisms.
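To illustrate the SMTP point, the Python sketch below composes a message with the standard library's `email.message.EmailMessage` and lists the commands a client would send in a basic SMTP exchange (RFC 5321). The addresses are hypothetical placeholders on reserved example domains, and the helper names `build_message` and `smtp_client_commands` are invented for illustration. Nothing is actually sent; the point is simply that both the 'From' header and the envelope sender are text chosen by the sender.

```python
from email.message import EmailMessage


def build_message(claimed_sender: str, recipient: str, body: str) -> EmailMessage:
    """Compose an email whose 'From' header is whatever the caller chooses.

    Nothing here checks that claimed_sender belongs to whoever is composing
    the message; the header is just a line of text.
    """
    msg = EmailMessage()
    msg["From"] = claimed_sender
    msg["To"] = recipient
    msg["Subject"] = "Quarterly figures"
    msg.set_content(body)
    return msg


def smtp_client_commands(envelope_sender: str, recipient: str, msg: EmailMessage) -> list[str]:
    """Return the client side of a basic SMTP exchange carrying msg.

    Simplified sketch: server replies are omitted, and a real client would
    also use CRLF line endings and dot-stuff lines beginning with '.'.
    Only text is built here; no network connection is opened.
    """
    return [
        "HELO client.example.com",
        f"MAIL FROM:<{envelope_sender}>",  # envelope sender: also client-supplied
        f"RCPT TO:<{recipient}>",
        "DATA",
        msg.as_string(),  # headers, including the claimed 'From', then the body
        ".",  # a lone dot ends the message data
        "QUIT",
    ]


msg = build_message("ceo@example.com", "alice@example.org", "Please see attached.")
for command in smtp_client_commands("ceo@example.com", "alice@example.org", msg):
    print(command)
```

Later add-ons such as SPF and DKIM let receiving servers check claims like these, but they help only where domains publish the relevant records and receivers enforce them, which is precisely the uneven-adoption problem.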

Yet there exists an even more intractable issue: us, the general populace. As Internet access becomes both cheaper and increasingly necessary for day-to-day affairs, a growing number of people have always-on high-speed connections. Unfortunately, this makes personal computers a target not only in their own right but also as tools for further cyber-crime. By assembling large networks of compromised 'zombie' computers, known as 'botnets', malicious agents can not only significantly increase the network firepower available for sending spam or performing DDoS attacks but also better cover their tracks: the attacks come directly from the systems of innocent but insufficiently security-conscious bystanders. In most cases, it is thus impossible to identify the actual adversary.

While it is easy to blame naive end users for not securing their personal computers, adopting such an attitude is of little use. It is almost trite to mention the annoying persistence of Windows Vista security prompts, but the habit of reflexively clicking through them exemplifies how willing people are to repeatedly trade minor, intangible risks for immediate, concrete rewards. Although individual security breaches at the personal level have relatively minor consequences, except in the aggregate form of botnets, similar patterns of behaviour by privileged users, such as members of the armed forces, can have far greater immediate impact. Around the turn of the century, the Melissa virus jumped from the wider Internet to the American military's closed network in less than 24 hours, most likely because a careless user connected the same system to both networks. More recently, Stuxnet was designed to spread via USB memory sticks, allowing it to hop easily onto the targeted Iranian centrifuge control computers despite a reasonable effort to isolate them from the Internet.

Even where no network connection is involved, the mere existence of other, similar systems provides vectors for malware transmission and a cloak of anonymity for the originator. Had the Iranian computer systems not been running the same software and operating system as thousands of other industrial plants, it would have been far more difficult for Stuxnet to spread as it did, hopping from plant to plant while activating its destructive payload only on specific targets. In that case, a more directed attack would likely have been required, one that might have revealed the perpetrators.

The Great Leveller

Before the current Information Age, barring occasional exceptions, it was difficult for a single disaffected individual to acquire huge influence over the world or damage critical infrastructure without significant personal risk to life and limb. The Internet has changed all of that: a single person with moderate technical skills can, from the relative safety of his or her home, direct a botnet to temporarily cripple an e-commerce site through a DDoS attack, as was attempted against Visa, Mastercard, and PayPal following their decisions to suspend payments to Wikileaks.

Similarly, a single disaffected individual with access to classified information was able to make public thousands of diplomatic cables, in a manner that has proven exceedingly difficult for the US Government to suppress. Although the source may have been identified and arrested in this particular case, the damage had already been done. With multiple copies of the data scattered on servers throughout the world and downloaded onto individual computers, it is nearly impossible to prevent further dissemination.
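A back-of-the-envelope model shows why scattered copies are so hard to suppress. Assume, purely for illustration, that each of n mirrors is taken down independently with probability p; then every copy disappears only with probability p to the power n, which shrinks rapidly as n grows. The function name `prob_all_copies_removed` and the figures are hypothetical, and real takedowns are neither independent nor equally likely to succeed.

```python
def prob_all_copies_removed(p_takedown: float, n_copies: int) -> float:
    """Probability that every copy is taken down.

    Toy assumption: each of n_copies mirrors is removed independently with
    probability p_takedown, so the chance that all are removed is p ** n.
    """
    if not 0.0 <= p_takedown <= 1.0:
        raise ValueError("p_takedown must be between 0 and 1")
    if n_copies < 0:
        raise ValueError("n_copies must be non-negative")
    return p_takedown ** n_copies


# Even a 90% success rate per mirror rarely erases all 50 copies (about 0.5%).
print(prob_all_copies_removed(0.9, 50))
# With 500 mirrors the chance becomes vanishingly small.
print(prob_all_copies_removed(0.9, 500))
```

Every copy downloaded onto an individual computer only adds to n.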

Even something as complicated as Stuxnet, whose construction involved three zero-day exploits, two stolen security certificates, and detailed knowledge of the Programmable Logic Controllers used in industrial systems, could conceivably have been designed by a small group of vigilantes. Given the complexity of the operation, the deliberate targeting, and the obvious motives of a major Western power, this is a somewhat unlikely explanation. Indeed, a far scarier scenario would be a malicious individual simply seeking to wreak havoc on industrial plants with something similar but untargeted. It remains a fact that there is not, and is unlikely ever to be, any direct evidence of State involvement.

Internet Without Borders

National governments have begun to recognize the challenges posed. Indeed, in the 2010 UK National Security Strategy review, 'hostile attacks upon UK cyber-space' are categorized as a Tier One priority risk. However, the twin issues of the lack of accountability and the disproportionate potential impact of single individuals present significant difficulties for governmental responses. While covert operations agencies have long been able to achieve plausible deniability, most major operations could reasonably be assumed not to be the work of individuals acting alone. This is no longer the case. How much of the hacking done from within Russia is actually sanctioned by Moscow? What is the appropriate response to a nearly untraceable attack like Stuxnet? Some nations have begun requiring the use of real names online, which, if perfectly implemented, could strip away the anonymity behind which malicious parties hide. However, even ignoring the free speech considerations, it is highly unlikely that any such system would work in practice, as technical systems are themselves susceptible to being misled and zombie computers could still be used.

Another possible solution is for governments to hold one another accountable for all hacking activity originating from within their borders. Indeed, in an analogous vein, Beijing has already warned that it would hold Washington responsible for terrorist attacks conducted with the assistance of Google Earth, as the US Government has not complied with China's requests for Google to lower the image resolution of sensitive areas.

However, while it may be reasonable to state a priori that each nation should police activity within its own borders, this is technically very difficult when it comes to the Internet, since cyber-crime almost inherently crosses national boundaries. How, for example, should a government respond to cyber-warfare waged by a botnet primarily situated in Britain, taking orders relayed through servers in Russia, and controlled by someone located in Brazil, assuming the attack could be traced that far back in the first place? What share of the blame should the owners of the compromised British computers bear for not having properly secured their systems? In Germany, Internet customers can be held personally liable if their improperly secured wifi networks are used for illegal file sharing; unknowing participation in cyber-warfare would presumably be treated with more gravity.

Difficult as the jurisdictional and enforcement issues will be, an even thornier problem arises from the fact that citizens of one nation are in direct contact with the governments of others, while cultural norms and laws differ considerably from one nation to another. For example, much to the consternation of American free speech advocates, an English court claimed jurisdiction in the 2004 libel case surrounding the book Funding Evil, which was not published in the UK, on the grounds that 23 copies had been purchased in England from online retailers and a chapter had been made available on the Internet. Several American states have since passed laws specifically aimed at protecting against 'libel judgments in countries whose laws are inconsistent with the freedom of speech granted by the [US] Constitution'. What might happen to an American who set out to disseminate information 'harmful to the spiritual, moral, and cultural sphere of' China, as the Shanghai Cooperation Organisation has chosen to define 'information war'? The United States would probably regard such dissemination as protected free speech, yet China may consider it an instance of cyber-terrorism; and, should the United States fail to take action, China might construe that inaction as an act of cyber-aggression in itself!

The Next Frontier

Cyber-space arguably represents the next frontier in international relations, as nations cope with the challenges of being able to directly and immediately influence the infrastructure, culture, and lives of people throughout the world and, more importantly, of being subject to such influences in return. The cross-boundary nature of the Internet is beginning to come into conflict with differing national laws and cultural norms, a tension spurred on by the obvious difficulties of dealing with cyber-security on a global stage. Thus, in parallel with technical and educational measures to enhance cyber-security, diplomatic norms will have to adapt to the powers afforded to individuals by the anonymity and interconnectedness of the Internet.